3.6 Model Deployment

Momentum provides one-click deployment of ML models to MLOps. Before deploying a model, make sure that Momentum is configured so that it can locate your MLOps deployment.

Check Settings and Configuration and ensure the following properties are configured correctly:

| Parameter | Example Value | Explanation |
|---|---|---|
| MLOPS_URL | https://host1.momentum.local:9443 | Use an internal hostname or IP address, not a public domain or IP. Contact your admin to obtain the hostname where MLOps is hosted. Momentum communicates with MLOps internally over this host and port, so for security reasons use an internal host:port. |
| MLOPS_PUBLIC_URL | http://<public-ip-or-domain>:9443 | The public hostname and port used to access the MLOps UI and log in securely to use its services. |
| MLOPS_TOKEN | See the MLOps Token How-tos | The security token that Momentum uses to communicate securely with MLOps. |
| MLOPS_CLUSTER | default_cluster | MLOps can deploy models to various environments, such as QA, sandbox, staging, and production, hosted on a local server, Azure, AWS, or another cloud service provider. The value default_cluster means the model is hosted on the same machine where MLOps is deployed. |
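Before clicking through to deployment, it can help to sanity-check these four values. The following is a minimal sketch, not part of Momentum: it assumes you have copied the settings into a plain Python dictionary, and the function name `check_mlops_settings` is hypothetical. It only checks that each property is present and that the two URLs have an http(s) scheme, a host, and an explicit port, as in the example values above. It does not contact MLOps or validate the token.

```python
from urllib.parse import urlparse

# Property names taken from the settings table above.
REQUIRED_KEYS = ("MLOPS_URL", "MLOPS_PUBLIC_URL", "MLOPS_TOKEN", "MLOPS_CLUSTER")
URL_KEYS = ("MLOPS_URL", "MLOPS_PUBLIC_URL")


def check_mlops_settings(settings):
    """Hypothetical helper: return a list of problems found in the settings.

    An empty list means no problem was detected by these basic checks.
    """
    problems = []

    # Every property must be a non-empty string.
    for key in REQUIRED_KEYS:
        value = settings.get(key)
        if not isinstance(value, str) or not value.strip():
            problems.append(f"{key} is missing or empty")

    # The two URLs should look like scheme://host:port (assumption based on
    # the example values in the table).
    for key in URL_KEYS:
        value = settings.get(key)
        if not isinstance(value, str) or not value.strip():
            continue  # already reported above
        try:
            parsed = urlparse(value.strip())
            port = parsed.port  # raises ValueError for a non-numeric port
        except ValueError:
            problems.append(f"{key} is not a valid URL")
            continue
        if parsed.scheme not in ("http", "https"):
            problems.append(f"{key} must start with http:// or https://")
        if not parsed.hostname:
            problems.append(f"{key} has no host")
        if port is None:
            problems.append(f"{key} has no explicit port")

    return problems


if __name__ == "__main__":
    # Placeholder values for illustration only; use your own settings.
    example = {
        "MLOPS_URL": "https://host1.momentum.local:9443",
        "MLOPS_PUBLIC_URL": "http://203.0.113.10:9443",
        "MLOPS_TOKEN": "example-token",
        "MLOPS_CLUSTER": "default_cluster",
    }
    for problem in check_mlops_settings(example):
        print(problem)
```

The check returns a list rather than raising on the first error so that all misconfigured properties can be reported in one pass.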

To deploy a model from Momentum to MLOps:

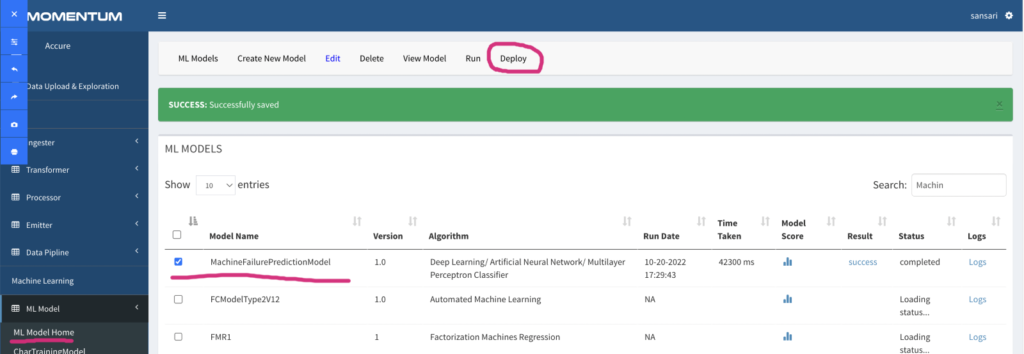

From the ML Model home page, select the model you wish to deploy to MLOps and click the “Deploy” button in the top menu bar.

The status message indicates whether the model was deployed successfully. Figure 3.7 shows the deployment screen.

Figure 3.7: Model deployment screen. The red circle marks the “Deploy” button.

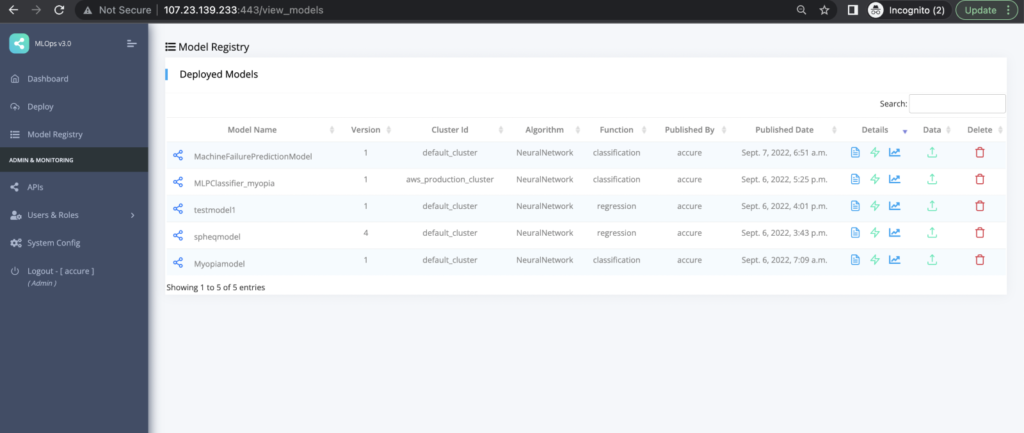

Log in to MLOps and check that the model you just deployed is listed in the model registry. Figure 3.8 shows our model in the registry.

Figure 3.8: Model registry of MLOps showing the deployed models and versions.

See the following topics for more details on MLOps:

Creating MLOps Security Token

Checking Model Metadata and Prediction API

Checking Model Quality Report

Checking Data Drift Report

Checking Model Operating Report